What is Blast Radius? How to Strategically Reduce It?

March 10, 2026

In recent years, as systems have grown larger and dependencies more complex, the term "blast radius" has become increasingly common in discussions about backend system design, DevOps, and Site Reliability Engineering (SRE).

For those outside the software engineering field, "blast radius" intuitively refers to the physical extent of a bomb's explosion. But what does it mean in the context of software engineering? Why is this concept important to engineers? And what methods can reduce the scope of incident impact? In this article, we'll explore this frequently heard term and the issues surrounding it.

What is Blast Radius?

In software engineering, writing code is just one piece of the puzzle. Ensuring that software reliably delivers new features to users requires attention at several stages of the lifecycle. Before an incident, we can reduce its likelihood through testing and similar measures; after an incident, we can accelerate recovery through rollback and other techniques.

Beyond the pre- and post-incident phases, there's another important consideration: during an incident, limiting its scope. This is where the term "blast radius" comes in. Blast radius refers to the range of impact when an incident occurs.

For example, if a bug is discovered after a feature goes live and it only affects one page, the blast radius is relatively small. However, some incidents can impact multiple pages within a single product, or span across multiple products entirely.

Several recent incidents have shown what a blast radius at global scale looks like. The Cloudflare outage of November 2025 stands out as particularly memorable: because so many sites sit behind Cloudflare's network, a large share of the web became unavailable at the same time. A blast radius of that size is exactly what most software teams do everything they can to prevent.

Incidents are inevitable even with the best testing practices, because modern software systems have numerous dependencies. When a dependency fails, an incident can occur even if your own team's portion is working correctly. In 2024, Azure's West US data center experienced a major power outage. Because Vercel's deployment platform partially depended on Azure's services, Vercel became unusable when the data center went down, despite having done nothing wrong on its end. In 2026, an AWS data center in the Middle East was affected by geopolitical conflict and had to shut down completely because of a fire. Events like these are difficult to anticipate.

Facing these unpredictable incidents, software teams cannot simply rely on good testing. Instead, they must think about how to prevent incidents from spreading when they occur, and how to ensure users can continue using the software product even when technical failures happen. In other words, effectively limiting blast radius is a challenge every software team must address.

Concrete Methods to Reduce Blast Radius

Now that we understand what blast radius is, many readers likely wonder: "What methods can effectively reduce blast radius?" In other words, when failures occur, how can teams prevent their impact from expanding?

PlanetScale, a globally-scaled database platform, discusses three core principles for extreme fault tolerance in their article "The principles of extreme fault tolerance": isolation, redundancy, and static stability. Let's examine how teams can implement each principle.

Isolation

When discussing how to limit blast radius, most people's first instinct is to use isolation techniques that confine impact to specific areas.

In the software industry, many companies draw inspiration from shipbuilding practices. Modern ships are designed with bulkheads that divide the hull into separate compartments that don't interconnect. With this design, if a hull breach occurs, water only enters the damaged compartment and doesn't affect others. At the same time, the structure of other compartments ensures the ship remains stable, preventing a single compartment's flooding from sinking the entire vessel.

Applying this concept to software engineering leads to what's commonly called the Bulkhead Pattern. For instance, Microsoft Azure recommends that architects consider the bulkhead pattern when designing architectures, in order to localize failures.

Some approaches refer to this pattern as Cell-based Architecture. The name reflects the idea of replicating an entire system into multiple isolated, self-contained cellular units, each capable of independently handling traffic and state. When one unit fails, the impact remains confined to that unit rather than bringing down the entire system.
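To make the cell idea concrete, here is a minimal sketch in Python. It assumes each customer is pinned to one cell by hashing a customer ID; the cell names, the `cell_for` function, and the `affected_customers` helper are all hypothetical and only illustrate how a cell boundary caps the blast radius.

```python
import hashlib

# Hypothetical cell names; a real deployment would define its own.
CELLS = ["cell-0", "cell-1", "cell-2", "cell-3"]


def cell_for(customer_id: str, cells: list[str] = CELLS) -> str:
    """Pin each customer to exactly one cell using a stable hash."""
    digest = hashlib.sha256(customer_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(cells)
    return cells[index]


def affected_customers(customer_ids: list[str], failed_cell: str) -> list[str]:
    """Return only the customers whose requests land in the failed cell."""
    return [c for c in customer_ids if cell_for(c) == failed_cell]


customers = [f"customer-{i}" for i in range(1000)]
impacted = affected_customers(customers, "cell-0")
print(f"{len(impacted)} of {len(customers)} customers are in the failed cell")
```

With four cells, a failure in one cell reaches only the customers assigned to it, while the rest keep being served by the remaining cells. One caveat of this sketch: modulo hashing reassigns many customers whenever the number of cells changes, so a production system would likely need a more stable assignment, such as an explicit mapping table.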

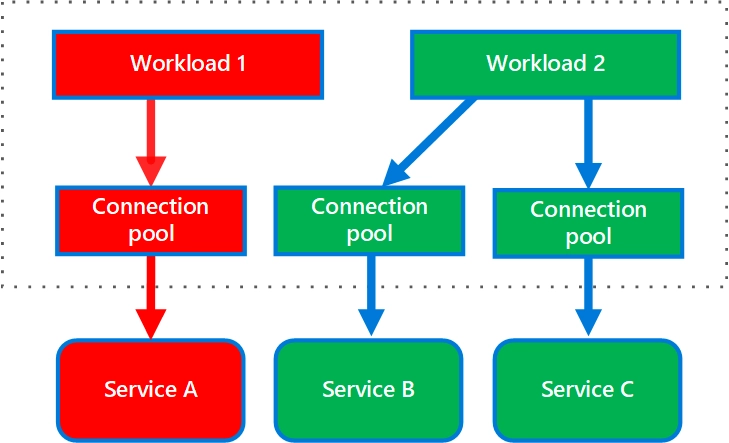

The diagram above shows Azure's recommended bulkhead pattern design. Services A, B, and C are separated into three independent entities, so if service A goes down, the blast radius is limited to A and services B and C are unaffected.
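One common way to apply the bulkhead pattern inside a single application is to give each downstream service its own bounded pool of concurrent calls. The sketch below assumes that approach; the `Bulkhead` class, the service names, and the limits are illustrative and are not taken from Azure's guidance.

```python
import threading


class BulkheadFullError(Exception):
    """Raised when a compartment has no free slots left."""


class Bulkhead:
    """Caps the number of concurrent calls allowed into one dependency."""

    def __init__(self, name: str, max_concurrent: int) -> None:
        self.name = name
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def call(self, func, *args, **kwargs):
        # Fail fast instead of queueing, so a slow dependency cannot
        # tie up callers that only need the other services.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError(f"bulkhead '{self.name}' is full")
        try:
            return func(*args, **kwargs)
        finally:
            self._slots.release()


# One compartment per downstream service (names and limits are illustrative).
bulkheads = {
    "service_a": Bulkhead("service_a", max_concurrent=2),
    "service_b": Bulkhead("service_b", max_concurrent=2),
}
```

If calls to service A hang and fill both of its slots, further calls to A are rejected immediately, while calls routed through the `service_b` bulkhead still have their own slots available. The choice to reject rather than wait is a design decision in this sketch; a bounded queue with a timeout would be another reasonable option.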

Recall the Vercel incident mentioned earlier. Failing over from Azure's West US region to East US is only an option under a fundamental assumption: the two regions are isolated, so a failure in one does not affect the other.

This is not unique to Azure. Major cloud providers generally design their regions on a "shared nothing" basis, so that an incident in one region affects only that region. AWS experienced an incident in the us-east-1 region in 2025. Its impact was significant because many companies deploy resources in that region, but it remained largely contained within us-east-1 rather than spreading to AWS's other regions. This containment was possible precisely because AWS had already ensured that computing and storage resources in each region were independent.

Redundancy

The isolation discussed above inherently implies redundancy. Redundancy means keeping some machines on standby even though they are not actively serving traffic. When an incident occurs, the system can switch to these standby machines immediately, so that a single machine's failure does not render the entire system inoperable.

Redundancy typically works in conjunction with routing. When a primary dependency fails, routing can quickly redirect traffic to a standby component. This switching process is commonly called "failover." In practice, many companies conduct regular drills to make sure that switching to the redundant system goes smoothly if a critical dependency fails.
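The routing side of failover can be sketched in a few lines of Python. This is a simplified illustration, assuming backends are plain functions tried in priority order and that a `ConnectionError` means a backend is down; `call_with_failover`, `west_us`, and `east_us` are hypothetical names, not any provider's API.

```python
from typing import Callable, Sequence


class AllBackendsFailedError(Exception):
    """Raised when neither the primary nor any standby could serve the request."""


def call_with_failover(backends: Sequence[Callable[[str], str]], request: str) -> str:
    """Try backends in priority order: primary first, then standbys."""
    errors = []
    for backend in backends:
        try:
            return backend(request)
        except ConnectionError as exc:
            # Treat connection failures as "this backend is down" and move on.
            errors.append(exc)
    raise AllBackendsFailedError(f"{len(errors)} backend(s) failed")


def west_us(request: str) -> str:
    # Simulated outage in the primary region.
    raise ConnectionError("West US is unreachable")


def east_us(request: str) -> str:
    return f"east-us handled {request}"


print(call_with_failover([west_us, east_us], "GET /deployments"))
# prints "east-us handled GET /deployments"
```

Real failover usually happens at the DNS, load balancer, or database layer instead of in application code, and it also has to deal with health checks and data replication. Those parts are where drills tend to surface surprises.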

Vercel published an article titled "Preparing for the worst: Our core database failover test" describing how they do this in practice, and it is highly recommended reading. In it, they explain that they began conducting these drills precisely because of the Azure West US incident mentioned earlier. Vercel had redundancy available in the East US region at the time, but because they had not rehearsed the switch, they could not fail over smoothly to the East US machines when the actual failure occurred. The experience taught Vercel's team the importance of regular practice, so that when incidents do happen the team can respond effectively instead of panicking.